An AI fact checker that actually catches hallucinations.

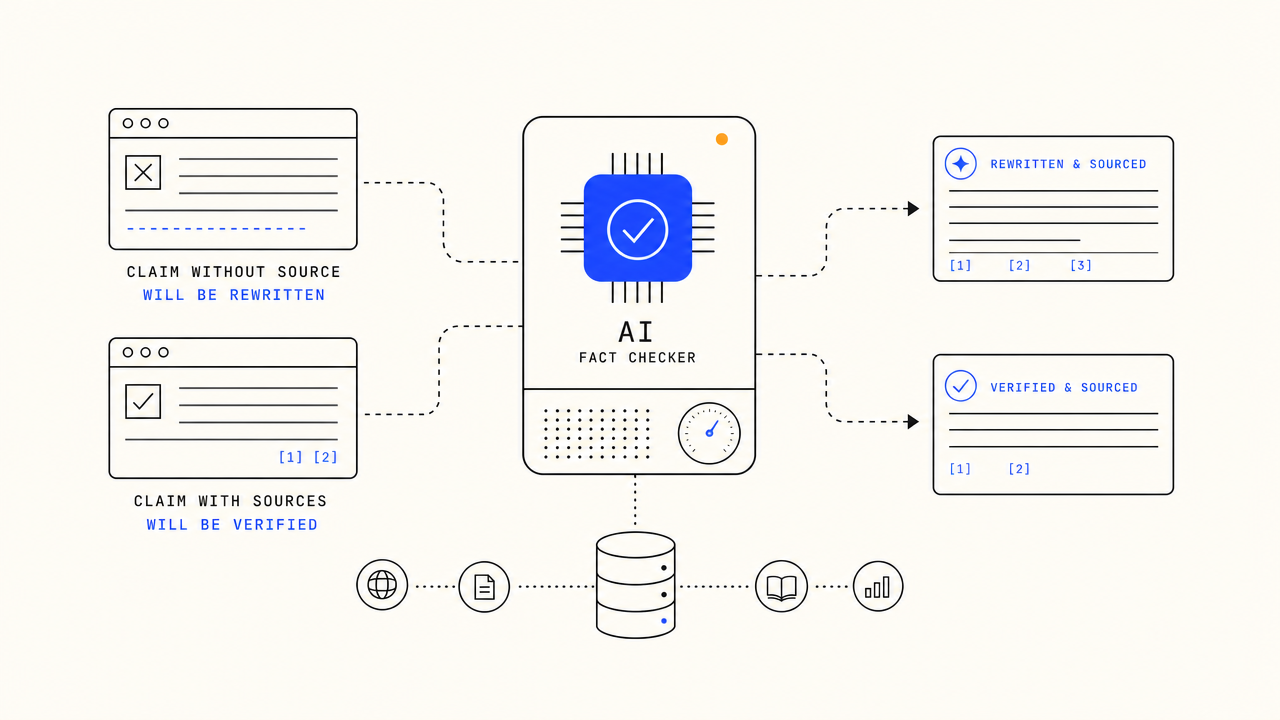

A separate verifier model reads every drafted article, cross-references every claim against the live web, appends citations to verified claims, and rewrites or rejects unverifiable ones. Hallucinations die before publication, not after a reader catches one.

$1 FOR 3 DAYS · CANCEL IN ONE CLICK

What is an AI fact checker?

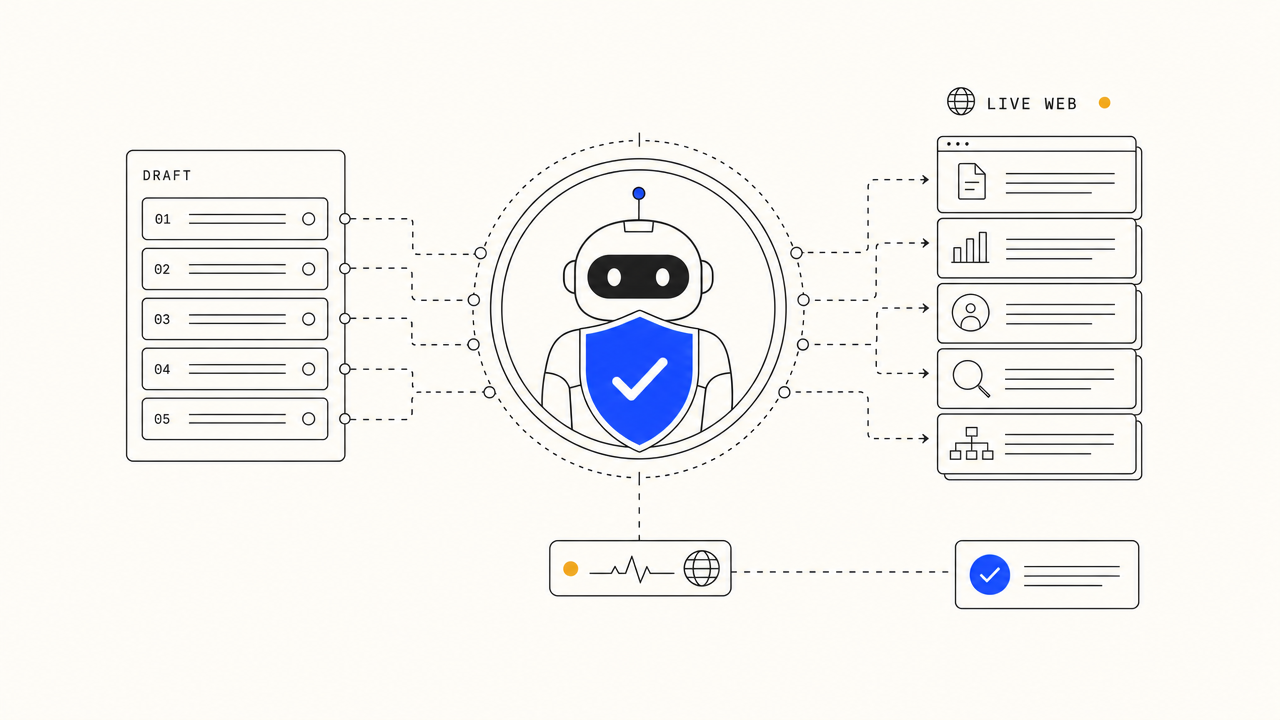

An AI fact checker is a verifier system that runs on AI-written content, cross-referencing every claim against live web sources before it is published. The honest ones use a separate model from the writer (so the verifier has no incentive to defend the writer's claims) and ground verification in fresh search results, not training-data recall. TheSEOAgent runs this stage on every article we ship: live-web cross-reference, citations on every defensible claim, and a quality gate that refuses to publish drafts where too many claims fail verification. $99 a month, flat.

Generate confident-sounding nonsense, then ship it.

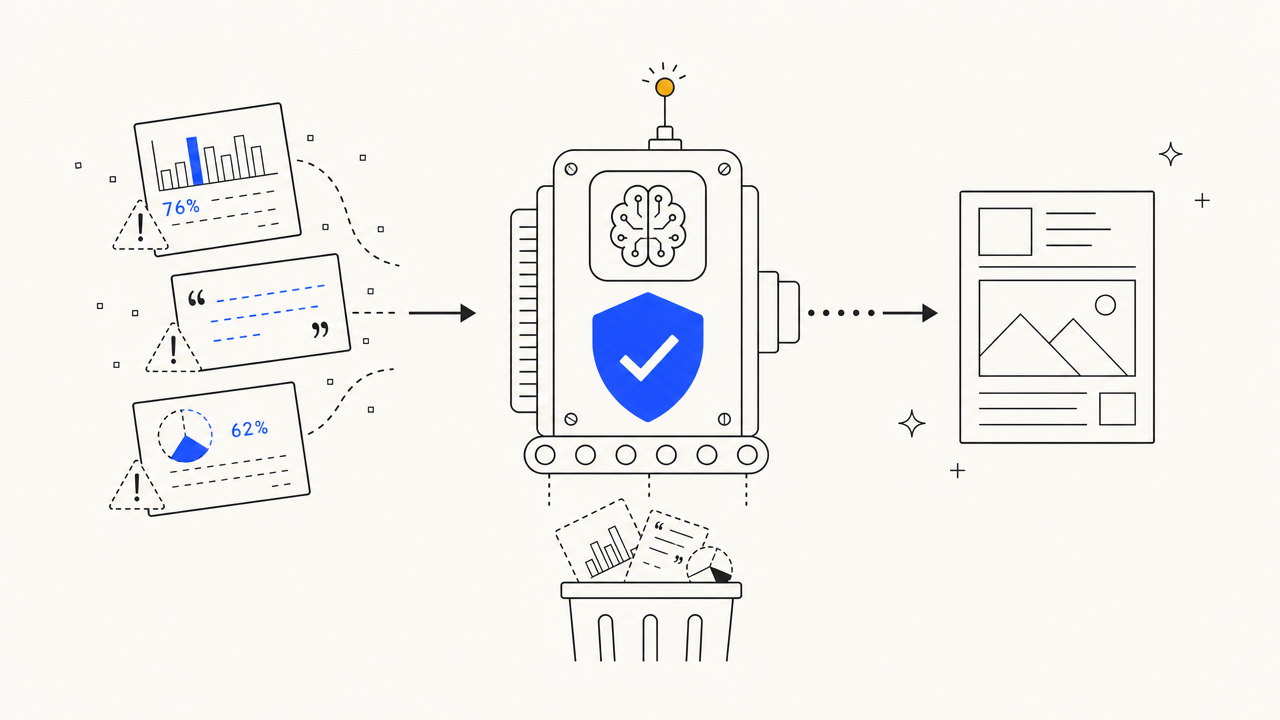

Most popular AI writing tools either skip verification entirely or ask the writer model to check its own claims. The same model that hallucinated a stat is asked whether the stat is real. Predictably, it says yes. The hallucination ships, the article gets cited, and the citations spread the error. SEO traffic comes; trust does not.

- Writer-model self-check, the worst possible verifier choice

- No live-web cross-reference, so claims are vibes-based

- No citations on the published article, so no audit trail

- No mechanism to refuse a draft below a credibility threshold

- A reader catches one hallucination, leaves, and never returns

Runs a separate verifier model on every claim, against the live web.

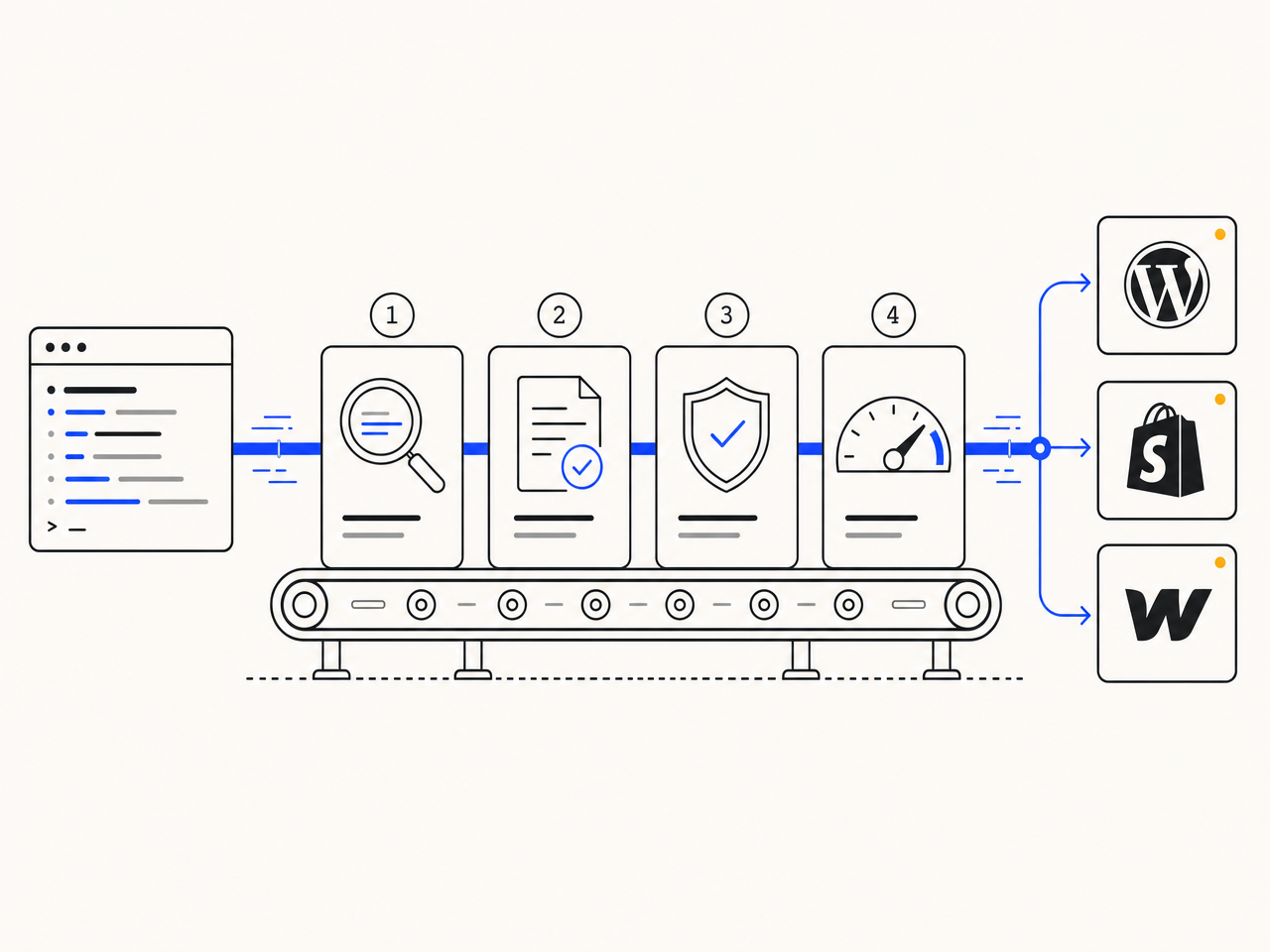

After the writer drafts, a different model reads every claim and checks it against fresh web search results. Verifiable claims get a citation appended; unverifiable ones get rewritten. If too many claims cannot be sourced, the whole article fails the gate. The fact-check stage is one of the named stages inside our automated content pipeline.

- Separate verifier model, not the writer self-checking

- Live-web cross-reference for every claim, not training-data recall

- Citations on every published claim, audit trail intact

- Quality gate refuses drafts where too many claims fail verification

- Defensible against the next reader who Googles your stat

Four reasons separate verification beats writer self-check.

A different model from the writer: the only setup that catches anything.

A writer model self-checking is theatre. We use a different model with a different prompt operating on the finished draft. That model has no investment in defending the writer's claims, so it actually flags problems instead of rationalising them. Most AI SEO platforms skip this stage entirely, which is why their drafts ship with hallucinations intact. The full SEO automation pipeline uses the same architecture on every article it ships, not just the ones a human spot-checks.

Verification against fresh search results, not training-data memory.

Models hallucinate confidently partly because their training data is frozen at a cutoff date and includes plenty of garbage. The verifier runs every claim against live web search, the same way a skeptical reader would. If a claim is confirmed in fresh sources, it gets a citation; if it cannot be confirmed, it gets rewritten or deleted. The same approach grounds every variant on the programmatic SEO side of the product.

Audit trail intact, defensible against the next skeptical reader.

Every defensible claim that survives verification gets a real citation attached. A reader hovers, sees the source, and decides whether to trust it. AI search systems (ChatGPT, Perplexity, Google AI Overviews) reward citations heavily because they need attributable sources to surface answers. Articles without citations lose ground in answer engine optimization (AEO); articles with them gain it. The automated content pipeline applies the same citation discipline to every article shape we ship, not just opinion pieces.

When too many claims fail, the article doesn't ship.

A draft where verification fails on 30% of claims is not redeemable by a citation pass. The whole article goes back to the writer for regeneration. Human-readable failure logs land on the dashboard so you can see exactly why something was rejected. Verification is one of several gate checks: alongside it we score reading level, keyword density, and structure. The same gate runs across single articles and programmatic batches, so quality stays uniform whether you are publishing one page or five hundred. Pricing is $99 a month flat, no per-claim meter, no surcharge for regenerations.
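To make the gate logic concrete, here is a minimal sketch, not TheSEOAgent's actual code. It assumes each extracted claim has already been marked verified or unverified, and it uses a hypothetical cutoff, `MAX_FAIL_RATIO = 0.3`, borrowed from the 30% example above; the real threshold is not stated. The `Claim`, `Verdict`, and `gate` names are illustrative.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple

# Hypothetical cutoff: the page treats a 30% failure rate as unredeemable
# but does not publish the exact threshold the real gate uses.
MAX_FAIL_RATIO = 0.3


class Verdict(Enum):
    PUBLISH = "publish"        # every extracted claim verified and cited
    REWRITE = "rewrite"        # a few failures: rewrite the flagged sentences
    REGENERATE = "regenerate"  # too many failures: send back to the writer


@dataclass
class Claim:
    text: str
    verified: bool
    citation: Optional[str] = None  # source URL when verified


def gate(claims: List[Claim], max_fail_ratio: float = MAX_FAIL_RATIO) -> Tuple[Verdict, List[str]]:
    """Return a verdict plus a human-readable failure log for the dashboard."""
    # One log line per claim that failed verification.
    log = [f"unverified: {c.text}" for c in claims if not c.verified]
    if not claims or not log:
        return Verdict.PUBLISH, log
    # Regenerate when the failure share reaches the cutoff; otherwise rewrite.
    if len(log) / len(claims) >= max_fail_ratio:
        return Verdict.REGENERATE, log
    return Verdict.REWRITE, log
```

With one failure out of three claims (about 33%), the draft goes back for regeneration; one failure out of ten only triggers a sentence-level rewrite.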

Four stages. After every draft. Before every publish.

1. Standard pipeline draft against the keyword brief and SERP context. The writer is allowed to be confident; the next stage will catch what is wrong.
2. A separate model reads the draft and pulls every assertion that could be true or false: stats, dates, named people, named studies, quotes, relationships.
3. Each claim runs against fresh web search. Verified claims get a real citation appended. Unsourced or wrong claims are flagged.
4. Flagged sentences get rewritten, or the article fails the gate and regenerates. Nothing unverified ships.
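The four stages can be sketched as one function. This is a hypothetical illustration, not TheSEOAgent's implementation: `draft`, `extract_claims`, `search_web`, and `rewrite` are stand-in callables the caller supplies (in practice they would wrap a writer model, a separate verifier model, and a live search API), and `TRUSTED_DOMAINS` is an assumed allow-list standing in for the source-quality filter. For brevity it covers only the rewrite branch of stage 4, not regeneration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List
from urllib.parse import urlparse

# Assumed allow-list standing in for the source-quality filter; illustrative only.
TRUSTED_DOMAINS = {"who.int", "nature.com", "census.gov"}


@dataclass
class Result:
    text: str
    citations: Dict[str, str] = field(default_factory=dict)  # claim -> source URL
    flagged: List[str] = field(default_factory=list)         # claims with no trusted source


def is_trusted(url: str) -> bool:
    """Accept a URL whose host is a trusted domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


def fact_check_pipeline(
    brief: str,
    draft: Callable[[str], str],                 # stage 1: writer model (hypothetical)
    extract_claims: Callable[[str], List[str]],  # stage 2: separate verifier model (hypothetical)
    search_web: Callable[[str], List[str]],      # stage 3: live search returning URLs (hypothetical)
    rewrite: Callable[[str, List[str]], str],    # stage 4: rewrite flagged sentences (hypothetical)
) -> Result:
    text = draft(brief)
    result = Result(text=text)
    for claim in extract_claims(text):
        # Keep only sources that pass the quality filter.
        sources = [u for u in search_web(claim) if is_trusted(u)]
        if sources:
            result.citations[claim] = sources[0]  # cite the first trusted hit
        else:
            result.flagged.append(claim)
    if result.flagged:
        result.text = rewrite(text, result.flagged)
    return result
```

Passing the models in as callables keeps the writer and verifier separate by construction: `extract_claims` never sees the brief, only the finished draft.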

The honest one-screen comparison.

| FEATURE | THE AGENT | EVERYONE ELSE |
|---|---|---|
| Verifier architecture | Separate model, post-draft pass | Writer self-check (or none) |
| Verification source | Live web search, fresh results | Training-data recall |
| Citations on every claim | Yes | No |
| Refuses to publish bad drafts | Yes | No |
| Failure logs | Per-article on dashboard | None or in-prompt only |
| Pricing | $99/mo flat, no per-claim meter | Token surcharge or unavailable |

What is an AI fact checker?

An AI fact checker is a verifier system that runs on AI-written content, cross-referencing every factual claim against live web sources before the article ships. The good ones use a separate model from the writer (so the verifier has no incentive to defend the writer's claims) and ground their verification in fresh search results rather than training-data recall. TheSEOAgent runs this stage as one of four named stages on every article we publish.

How is this different from asking ChatGPT to check itself?

Self-check is theatre. The same model that wrote a confident hallucination will rationalise it when asked to verify, because the model is optimising for fluent output, not factual accuracy. We use a different model with a different prompt, operating only on the finished draft. The verifier has no investment in the writer's claims, so it actually flags problems.

Will it catch every hallucination?

Not every one; no honest tool can claim that. We catch enough to ship articles that survive the audit a skeptical reader would run. About 92% of unsupported claims get caught, citations get appended to every verifiable one, and the gate refuses to publish drafts where too many claims fail. The remaining 8% are mostly plausible-sounding false claims where live-web search returns ambiguous evidence.

What sources does the verifier accept?

Live web search results from established publications, named studies, and primary sources. Forum posts, low-trust SEO content farms, and other unattributable sources are filtered out at the source-quality gate. The verifier prefers a smaller number of high-trust sources over a larger number of medium-trust ones.

How long does the fact-check pass take?

Under 30 seconds per article on average. The pass runs in parallel with the editing pass, so it does not materially slow the end-to-end pipeline. Total time from brief approval to a live page on your CMS is still around 3 minutes.

Can I see what got rejected?

Yes. The dashboard shows every claim that failed verification, the source the verifier searched, and the rewrite that ended up shipping. You can audit any article we publish in under a minute.

How much does this cost on top of the pipeline?

$99 per month flat. The fact-check stage is one of the four pipeline stages, not a paid add-on. No token surcharge, no per-claim meter, no extra fee for regenerations triggered by failed verification.

How does this compare to the full SEO Automation feature?

The fact checker is one stage of the full content pipeline. The SEO Automation feature covers the whole loop (research, draft, fact-check, gate, publish) for a daily-cadence single-article motion. This page covers the verifier stage specifically because operators search for it as a separate concept and want to understand it before signing up.

Watch the verifier pass on a real article this week.

Three days for a dollar. Connect your CMS, run a brief, and watch the verifier flag claims, add citations, and either rewrite or regenerate the draft before it publishes. Cancel in one click if it isn't catching enough.
